Common Google Search Console Errors: What They Mean & How to Fix Them

Google Search Console (previously Google Webmaster Tools) is a free platform that gives you valuable insights about your website, lets you notify Google of changes to your site, and informs you when Googlebot, Google’s crawler, picks up errors that can affect your site’s usability and performance in the search results.

These crawl errors are often misunderstood, and they can intimidate webmasters and marketers when Search Console issues them, and rightfully so. Talk of preventing your site from appearing in Google’s search results is certainly enough to scare a site owner, especially if that’s where a large portion of the site’s traffic comes from. In the vast majority of cases, however, the issue at hand is minor and isn’t going to tank your rankings or take you out of the search results.

Below, I will highlight some of the most common errors Search Console finds and break down what they mean and how to resolve them.

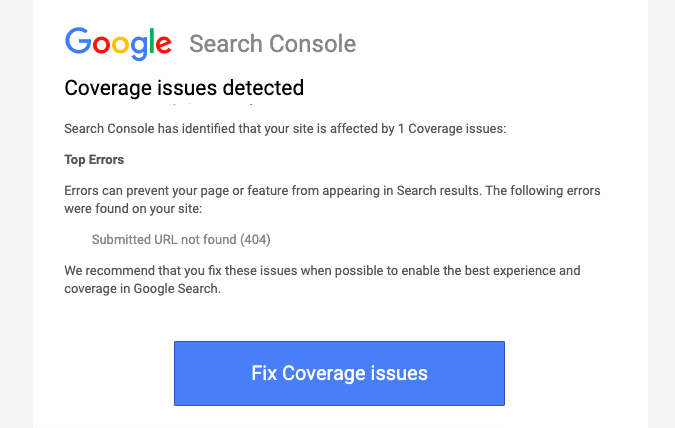

Coverage Issues

Submitted URL not found (404)

This is probably the most common coverage issue you’ll see in Search Console. It means that a page submitted to Google, usually through your sitemap, is returning a 404 status code. This usually occurs when a page is deleted from a site but isn’t removed from the sitemap. WordPress sitemap plugins such as Yoast will typically remove deleted pages from the sitemap automatically, but on non-WordPress sites you’ll have to remove the page from the sitemap manually.
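If you maintain your sitemap by hand, a small script can flag stale entries before Google does. The following is only a rough sketch, assuming a standard XML sitemap; the function names (`sitemap_urls`, `http_status`, `missing_pages`) are my own and not part of any Google or WordPress tool.

```python
import xml.etree.ElementTree as ET
from typing import Callable, Iterable, Optional
from urllib.error import HTTPError, URLError
from urllib.request import Request, urlopen

# Namespace used by the standard sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> list:
    """Return every <loc> URL listed in a standard XML sitemap."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status for a URL, or None if the server is unreachable.

    Uses a HEAD request; some servers answer HEAD differently from GET,
    so treat the result as a hint rather than proof.
    """
    request = Request(url, method="HEAD", headers={"User-Agent": "sitemap-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:  # 4xx and 5xx responses end up here
        return error.code
    except URLError:
        return None


def missing_pages(urls: Iterable[str],
                  get_status: Callable[[str], Optional[int]] = http_status) -> list:
    """Return the URLs that respond with a 404."""
    return [url for url in urls if get_status(url) == 404]
```

To use it, pass the text of your sitemap file to `sitemap_urls` and the result to `missing_pages`. Anything it returns is a candidate for removal from the sitemap.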

Submitted URL seems to be a Soft 404

Soft 404s are a little more confusing. Google considers a page to be a soft 404 when it returns a 200 status code but is effectively empty. In other words, the page does exist on the site but has little to nothing on it. For SEO purposes, these types of pages shouldn’t exist on a site anyway, so it’s best to either add real content to them or remove them.
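Google doesn’t publish the exact rule it uses to decide that a page is "empty", but you can approximate the idea by counting the visible words on a page that returns a 200. The sketch below is an assumption-heavy heuristic: the 50-word threshold and the name `looks_like_soft_404` are made up for illustration.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible words, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.words = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.extend(data.split())


def looks_like_soft_404(status: int, html: str, min_words: int = 50) -> bool:
    """Guess whether a page is a soft 404: status 200 but almost no text.

    min_words is an arbitrary threshold chosen for this sketch,
    not a number published by Google.
    """
    if status != 200:
        return False  # a real 404 or 5xx is a different issue
    extractor = _TextExtractor()
    extractor.feed(html)
    extractor.close()
    return len(extractor.words) < min_words
```

A page that trips this check isn’t necessarily flagged by Google, and the reverse is also true, but it’s a quick way to find thin pages worth a second look.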

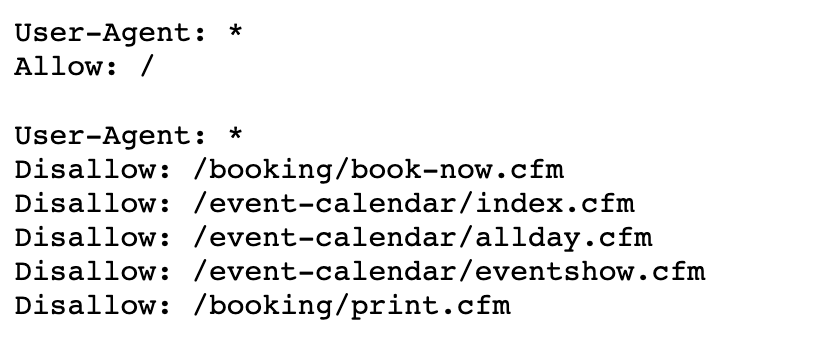

Submitted URL blocked by robots.txt

Your robots.txt file tells Google which pages you want it to ignore when crawling your site. This is used to limit the number of crawler requests and keep your server from getting bombarded. These are generally pages you don’t want showing up in Google, which is why Google sends this notification: it lets you know that a URL submitted in your sitemap is also disallowed in your robots.txt file, so you’ll need to decide whether it’s a page you want Google to look at. If it is, remove the rule that blocks it from your robots.txt file. If it’s not, remove the URL from your sitemap.
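You can find these conflicts yourself by testing each sitemap URL against your robots.txt rules. Here is a minimal sketch using Python’s built-in `urllib.robotparser`; the helper name `blocked_urls` is hypothetical, and Python’s parser doesn’t necessarily match Googlebot’s handling of every rule (wildcards in particular), so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

For example, with a robots.txt containing `Disallow: /private/`, any sitemap URL under `/private/` comes back in the list, and you can then decide whether to change the rule or drop the URL from the sitemap.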

Indexed, though blocked by robots.txt

I know this one seems to contradict the last one, and it’s probably the most confusing notice you’ll get from Search Console. A robots.txt file lists URLs you don’t want Google to crawl, but it doesn’t control indexing: if other pages link to a blocked URL, Google will sometimes index it anyway without crawling it. This notification is basically Google’s way of telling on itself for doing so. If you don’t want a page to be indexed, the only reliable way to ensure that is to add a noindex tag to the page. The robots.txt file won’t cut it. Keep in mind that Google has to crawl the page to see that tag, so the URL must not be blocked in robots.txt at the same time; otherwise the noindex tag is never read.
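To confirm that a page actually carries a noindex directive, you can look for it in the page’s HTML or in the `X-Robots-Tag` response header. The sketch below checks both; the function name `has_noindex` is my own, and it only handles the simple, common forms of the directive.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """Return True if the HTML or the X-Robots-Tag header value asks not to be indexed."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    parser.close()
    header_directives = {part.strip().lower() for part in x_robots_tag.split(",")}
    found = parser.directives | header_directives
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in found or "none" in found
```

Passing the page source and, if present, the value of the `X-Robots-Tag` header tells you whether the directive is in place.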

Redirect error

Redirect errors usually occur when a page has been redirected too many times, redirects in a loop, or redirects to a URL that returns a 404. These are fairly common when URLs have been changed multiple times. It’s always a good idea to perform regular maintenance on your redirect rules (the .htaccess file, if your site runs on Apache) to ensure redirects are up to date and you aren’t redirecting to other redirects and creating redirect chains.
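One way to audit your redirects is to list them as simple "from" and "to" pairs and follow each one to its end. The following sketch assumes you’ve already extracted your rules into a dictionary; `trace_redirects` and the 10-hop limit are illustrative choices, not Google’s documented limits.

```python
def trace_redirects(start: str, redirects: dict, max_hops: int = 10):
    """Follow a URL through a redirect map and describe what happens.

    Returns (chain, verdict), where verdict is one of
    "ok", "chain", "loop", or "too many redirects".
    """
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            return chain, "loop"  # we came back to a URL already visited
        seen.add(current)
        if len(chain) - 1 > max_hops:
            return chain, "too many redirects"
    # More than one hop means a redirect points at another redirect.
    verdict = "chain" if len(chain) > 2 else "ok"
    return chain, verdict
```

Any URL reported as a "chain" can usually be fixed by pointing the first redirect straight at the final destination.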

Server error (5xx)

This error generally has to do with the server where your site is hosted rather than the content of the site itself. If you check your site (or the particular page the error was returned on) and see that it is working, it most likely means the server was momentarily down or overloaded while Google was crawling the site. If this is the case, the error should be resolved the next time the site gets crawled. If the site or page is still down when you check it, there is a more serious issue with your server that will need to be resolved.
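Checking a page once doesn’t always tell you whether a problem is a blip or ongoing, so it can help to check a few times in a row. Here is a small sketch of that idea; `diagnose_5xx` is a hypothetical helper, and `get_status` stands for whatever function you use to fetch a URL’s HTTP status code (returning None when the server can’t be reached).

```python
import time


def diagnose_5xx(url, get_status, attempts: int = 3, delay_seconds: float = 5.0) -> str:
    """Check a URL several times and summarize how often the server fails.

    Returns "healthy", "intermittent", or "persistent".
    """
    failures = 0
    for attempt in range(attempts):
        status = get_status(url)
        # Treat an unreachable server (None) the same as a 5xx response.
        if status is None or 500 <= status <= 599:
            failures += 1
        if attempt < attempts - 1:
            time.sleep(delay_seconds)  # wait a little before the next check
    if failures == 0:
        return "healthy"
    if failures == attempts:
        return "persistent"
    return "intermittent"
```

A "persistent" result is the case that needs attention from whoever manages your hosting, while an "intermittent" one is worth keeping an eye on.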

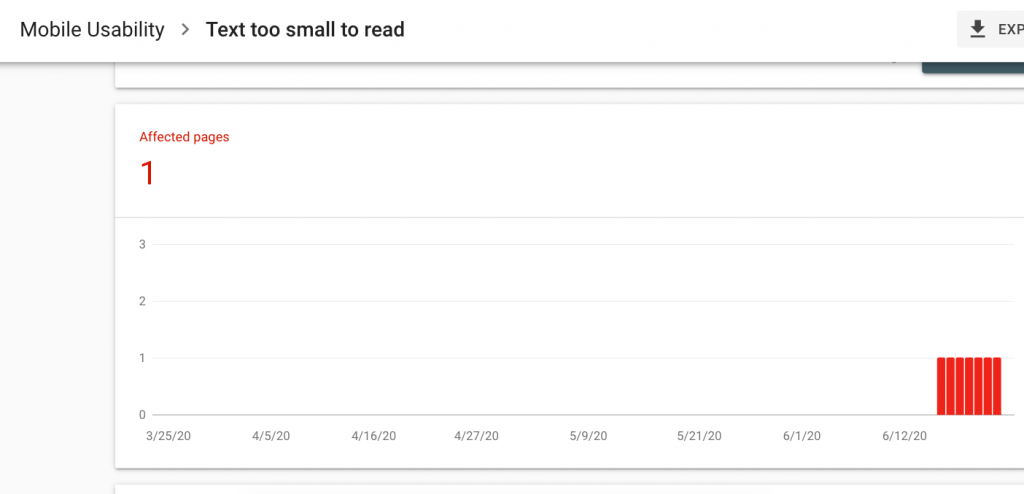

Mobile Usability

Text too small to read

Search Console can be extremely vague when it comes to some of these mobile usability issues. While Google doesn’t give you a minimum font size to go with, a base size of 16px on mobile tends to work best for avoiding usability issues and for SEO. If you want to make sure your page is considered mobile-friendly by Google, you can run the page through Google’s mobile-friendly test.

Clickable elements too close together

This one can be hard to diagnose because Google doesn’t say which elements are too close together. If your page only has a couple of clickable elements, it’s usually pretty simple to determine what Search Console is talking about. But for pages full of clickable elements, it’s best to keep them all at least 8px apart.
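If you can get the on-screen position of each clickable element (from your browser’s developer tools, for example), finding the crowded ones is simple geometry. This is only a sketch: the `Box` type and function names are made up, the 8px default is the rule of thumb above, and Google doesn’t publish how it measures spacing.

```python
from itertools import combinations
from math import hypot
from typing import NamedTuple


class Box(NamedTuple):
    """Bounding box of a clickable element, in CSS pixels."""
    name: str
    left: float
    top: float
    right: float
    bottom: float


def gap(a: Box, b: Box) -> float:
    """Shortest distance between two boxes (0 if they touch or overlap)."""
    dx = max(0.0, b.left - a.right, a.left - b.right)
    dy = max(0.0, b.top - a.bottom, a.top - b.bottom)
    return hypot(dx, dy)


def crowded_pairs(boxes, min_gap: float = 8.0) -> list:
    """Return the name pairs of boxes that sit closer together than min_gap."""
    return [(a.name, b.name) for a, b in combinations(boxes, 2) if gap(a, b) < min_gap]
```

For instance, two 48px-wide buttons placed 4px apart would be reported as a crowded pair, while a third button 20px away would not.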

All of this is to say: there’s no need to panic the next time you get an email with one of these errors from Search Console.

While these aren’t something to brush off and we do recommend fixing the errors as they arise and keeping your website as “healthy” as possible, they’re rarely as problematic as they seem.

866.249.6095
